I recently relaunched a podcast for a project I am working on, and adding deep links is something I hadn’t thought much about until now.

Web development is funny like that. Sometimes you find a tool like this and then you get the idea, as opposed to having an idea and needing to find the tool.

I have not tried it yet, but I’m seriously thinking about it. It could be really interesting for sermon audio, too. Check it out:

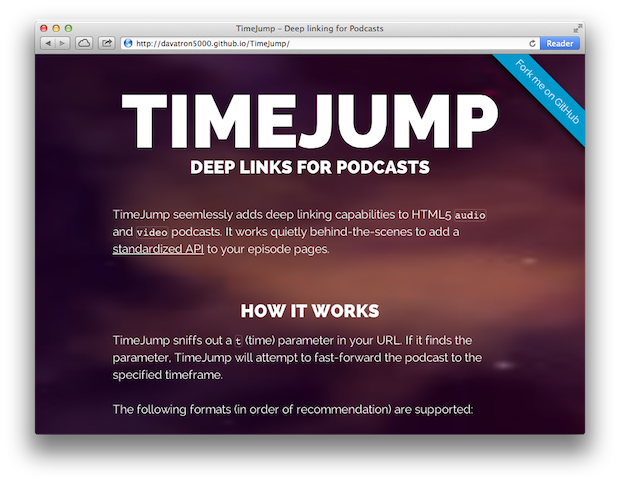

TimeJump

With TimeJump, all you have to do is change up your link.

TimeJump sniffs out a t (time) parameter in your URL. If it finds the parameter, TimeJump will attempt to fast-forward the podcast to the specified time.

So then your link would look something like this:

http://podcast.com/episode/#t=23:45
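To make the idea concrete, here is a minimal sketch of how a `t` value like the one above could be turned into seconds. This is not TimeJump's actual code: `parseTimeFragment` is a hypothetical name, and the sketch only assumes plain seconds or colon-separated values such as `23:45`.

```typescript
// Hypothetical helper (not part of TimeJump): reads a "t" value from the
// URL hash and converts it to seconds. Handles "SS", "MM:SS" and "HH:MM:SS".
// Returns null when there is no usable value.
function parseTimeFragment(url: string): number | null {
  const hashIndex = url.indexOf("#");
  if (hashIndex === -1) return null;
  const hash = url.slice(hashIndex + 1);

  // Look for t=<value> among &-separated hash parameters.
  const match = /(?:^|&)t=([^&]+)/.exec(hash);
  if (!match) return null;

  const parts = (match[1] ?? "").split(":");
  if (parts.length > 3) return null;

  let seconds = 0;
  for (const part of parts) {
    if (!/^\d+$/.test(part)) return null; // reject anything that is not digits
    seconds = seconds * 60 + Number(part);
  }
  return seconds;
}
```

In a browser, the result could then be assigned to the `currentTime` property of an audio or video element once it has loaded enough to seek.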

Imagine sermon notes or general podcast notes with links straight to the moment in the audio being discussed.

Yeah.

I dig it.

Learn more, download it, or fork it, all from the TimeJump website.

Cool idea. I’ll have to look at it for my podcasts.

Eric, hi.

I’m wondering if you’ve ever come across anything that builds on the concepts in TimeJump but takes them further.

I jotted out a quick sketch of what I’m looking for on my blog:

http://notes.natelawrence.net/transcriptsync/

The gist of it is this: given a chunk of audio or video, a full transcript of what was said in it, and subtitle-style timecodes that correspond to different phrases in the text, I want to present the transcript and the source media together and have the text auto-scroll to display the part of the transcript that is currently being heard.
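As a rough illustration of the lookup this needs, the sketch below finds which phrase is being spoken at a given playback time. The `Cue` shape and the `findActiveCue` name are assumptions made up for this example, and the cues are assumed to be sorted by start time without overlapping.

```typescript
// Assumed shape for one timed phrase of the transcript (times in seconds).
interface Cue {
  start: number;
  end: number;
  text: string;
}

// Returns the index of the cue that contains `time`, or -1 if none does.
// Binary search, so it stays fast even for long, word-level transcripts.
function findActiveCue(cues: readonly Cue[], time: number): number {
  let lo = 0;
  let hi = cues.length - 1;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    const cue = cues[mid];
    if (cue === undefined) return -1;
    if (time < cue.start) {
      hi = mid - 1;
    } else if (time >= cue.end) {
      lo = mid + 1;
    } else {
      return mid;
    }
  }
  return -1;
}
```

Because the lookup keeps no state, calling it whenever the media element fires `timeupdate` would also cover the case where the user seeks backward or forward; the matching element could then be brought into view with `scrollIntoView()`.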

I tend to transcribe audio as thoroughly as possible on first pass (including stuttering, “um”s, “uh”s, rewordings, etc.) just to be sure that I’ve not missed anything.

I then make a copy which I proofread and clean up, punctuating the original words to be as grammatically correct as possible and removing any repeated phrases, both to convey the speaker’s intended meaning and to provide a text that will translate well. This also includes correcting any slips of the tongue (say someone is illustrating a point and says “Abraham” when they obviously meant “Moses”, or accidentally says “Pacifically” rather than “specifically”).

This results in two transcripts, and I would like to give the viewer the option to toggle between them on the fly as the media plays.

My ideal is that I could simply mark up the proofread differences into the verbatim transcript and not have a duplicate copy of all the text they have in common.

This is good for two reasons:

1) File size/download time is cut by not redundantly storing the majority of the words twice.

2) The transcript player can keep visual continuity on the words that don’t change and simply animate the repeated phrases collapsing to the proofread replacement so that our viewers do not lose their place.
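One possible way to store both versions in a single file is to tag only the pieces that differ. The sketch below is an assumption about how that could look, not an existing format: `Token`, `only`, and `renderTranscript` are invented names.

```typescript
type Version = "verbatim" | "proofread";

// One piece of the merged transcript. Tokens without `only` are shared by
// both versions; tokens with it appear in just one of them.
interface Token {
  text: string;
  only?: Version;
}

// Builds the text for one version from the single merged token list.
function renderTranscript(tokens: readonly Token[], version: Version): string {
  return tokens
    .filter((t) => t.only === undefined || t.only === version)
    .map((t) => t.text)
    .join(" ");
}

// Example data: the shared words are stored once.
const merged: Token[] = [
  { text: "Um,", only: "verbatim" },
  { text: "Pacifically,", only: "verbatim" },
  { text: "Specifically,", only: "proofread" },
  { text: "Moses" },
  { text: "led" },
  { text: "them." },
];
```

Since the shared tokens are the same objects in both views, a player could keep them in place on screen and animate only the tagged tokens in or out when the viewer toggles.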

The transcript is good for translation.

The transcript is good for searching.

The transcript is good for hyperlinking to a specific point in the video.

The transcript is good for hyperlinking to references (as you mention).

The transcript is good for those who cannot hear or cannot hear well, and for cases where the video is not of sufficient quality to lip-read or has cut away to illustrative material while the speaker continues.

Above and beyond these requirements:

:: I’m looking for a way to highlight multiple sections of a single time-synchronized transcript, auto-generate the appropriate timecodes, and then present a playlist of selections within a single piece of audio or video.

:: I’m looking for a way to have a playlist of multiple audio + video clips (potentially from different websites) and retain the ability to play multiple selections of arbitrary length from within each of them.

(Imagine you have some audio journals and some video journals throughout a creative project and you want to play back all statements regarding a particular subject that is mentioned at least once in 75% of the recordings.)
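A playlist like this could be represented as a list of time ranges per source. The sketch below merges overlapping highlights and writes each range as a temporal media fragment (`#t=start,end`, from the W3C Media Fragments URI recommendation). The `Selection` shape and the function names are assumptions for illustration, and whether a given player honors the end time should be checked rather than assumed.

```typescript
// Assumed shape for one highlighted range (times in seconds).
interface Selection {
  src: string;
  start: number;
  end: number;
}

// Groups selections by source (in order of first appearance), sorts each
// group by start time, and merges ranges that overlap or touch.
function buildPlaylist(selections: readonly Selection[]): Selection[] {
  const order: string[] = [];
  for (const s of selections) {
    if (order.indexOf(s.src) === -1) order.push(s.src);
  }
  const sorted = selections
    .slice()
    .sort((a, b) => order.indexOf(a.src) - order.indexOf(b.src) || a.start - b.start);

  const playlist: Selection[] = [];
  for (const s of sorted) {
    const last = playlist[playlist.length - 1];
    if (last !== undefined && last.src === s.src && s.start <= last.end) {
      last.end = Math.max(last.end, s.end); // extend the previous range
    } else {
      playlist.push({ src: s.src, start: s.start, end: s.end });
    }
  }
  return playlist;
}

// Writes a selection as a URL with a temporal media fragment.
function toFragmentUrl(s: Selection): string {
  return `${s.src}#t=${s.start},${s.end}`;
}
```

A player that does not support the fragment's end time would still need to watch the playback position itself and advance to the next selection when the range ends.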

:: I am looking for a way for the player to buffer ahead the specific selections of audio or video that we have specified that we will be playing.

:: It should always be made clear to the user, through text, that what they have listened to is only a portion of the whole.

:: I am looking for a way for the selections of audio + video to work on mobile devices and video game consoles without the operating system’s default media player intercepting the HTML5 audio or video and simply playing back the entire recording.

:: I am looking for a way to automatically generate timecodes for the beginning of each word in a transcript. Currently, the only way I know of is to upload a video to YouTube along with a uniquely formatted transcript.

My ideal is actually a timecode for the beginning and end of each syllable.

This gives us several things:

1) When we switch from the verbatim transcript to the proofread transcript, we could actually skip the pieces of audio that we have folded out of view, allowing us to cut out stammering, rephrasing, unnecessarily long pauses while a speaker reviews their notes, etc.

2) At this point, we can have the subtitles/captions/transcript print to the screen one character at a time, synchronized with the speaker’s speech. We can visualize the speaker’s words materializing on the page as they are spoken, because we simply divide the amount of time that a syllable takes to play back by the number of characters in that syllable. This follows the speaker’s changes in pace closely, whereas averaging the time over all the letters in a whole word may be too loose to be pleasant whenever one syllable is held for a longer period of time.

3) This gives us the ability to generate a timecode for every letter in the transcript to seek to any point in the media (within the constraints of the compressed format that the media is stored in).

4) It would require more markup, but we could actually animate someone forming their wording when they are rephrasing a sentence by use of crossed out text, deleting a phrase that will be replaced to complete the sentence, etc. My instinct, since I was in elementary school, was that this would help people think more clearly about grammar and punctuation.
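The per-character timing in point 2 comes down to a small calculation. Here is a sketch of it, assuming syllable-level timecodes are already available; the `Syllable` and `CharTime` shapes and the `characterTimes` name are invented for this example.

```typescript
// Assumed input: one syllable with its start and end time in seconds.
interface Syllable {
  text: string;
  start: number;
  end: number;
}

// Output: the moment at which each character should appear.
interface CharTime {
  char: string;
  time: number;
}

// Spreads each syllable's duration evenly over its characters.
function characterTimes(syllables: readonly Syllable[]): CharTime[] {
  const result: CharTime[] = [];
  for (const syl of syllables) {
    const chars = syl.text.split("");
    if (chars.length === 0) continue;
    const step = (syl.end - syl.start) / chars.length;
    chars.forEach((char, i) => {
      result.push({ char, time: syl.start + i * step });
    });
  }
  return result;
}
```

The same list doubles as the per-letter seek table from point 3: clicking a character would seek to its `time`, within whatever precision the media format allows.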

My summary seems to have surpassed my original post in some ways, and there are more ideas here than my core requirements, which are as follows:

For a single piece of audio/video on a webpage and a time-synced transcript I would like to:

1) Display them side by side, showing the text that is currently being spoken (resynchronizing when the user seeks to a previous or future point in the recording).

The idea is that we can add our own transcript/subtitles for a video or audio that did not include one.

2) By clicking on a phrase within the transcript which corresponds to a segment of time, it will scrub the media player to that timecode (as TimeJump does on page load).

See the transcript below the video player at TED.com for an example.

http://www.ted.com/talks/blaise_aguera_y_arcas_demos_photosynth.html

3) Allow the user to stop the transcript’s auto scroll to search the text for a particular keyword + then seek to that time in the audio/video.

4) Allow the user to toggle off time hyperlinks in order to click on hyperlinks to external resources mentioned (a passage on Bible.com, a Wikipedia article, another church’s website, someone’s profile on Twitter, etc.). Perhaps it would suffice to hold down Ctrl and then click on a hyperlink embedded in the transcript to avoid seeking to that time in the media.
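Points 3 and 4 can be sketched as two small decisions: which times match a keyword, and what a click on a phrase should do. Everything named here (`TimedPhrase`, `searchTranscript`, `resolveClick`, the `timeLinksEnabled` flag) is hypothetical, and the Ctrl rule is just the behavior suggested above.

```typescript
// Assumed shape for a clickable phrase; `href` is an optional external link.
interface TimedPhrase {
  start: number;
  text: string;
  href?: string;
}

// Returns the start time of every phrase containing the keyword
// (case-insensitive), so the player can offer them as seek targets.
function searchTranscript(phrases: readonly TimedPhrase[], keyword: string): number[] {
  const needle = keyword.trim().toLowerCase();
  if (needle === "") return [];
  return phrases
    .filter((p) => p.text.toLowerCase().indexOf(needle) !== -1)
    .map((p) => p.start);
}

type ClickAction =
  | { kind: "seek"; time: number }
  | { kind: "follow"; href: string }
  | { kind: "none" };

// Follows the external link when Ctrl is held or time links are toggled
// off; otherwise seeks, as long as time links are enabled.
function resolveClick(
  phrase: TimedPhrase,
  ctrlKey: boolean,
  timeLinksEnabled: boolean
): ClickAction {
  if (phrase.href !== undefined && (ctrlKey || !timeLinksEnabled)) {
    return { kind: "follow", href: phrase.href };
  }
  if (timeLinksEnabled) return { kind: "seek", time: phrase.start };
  return { kind: "none" };
}
```

In a page, `ctrlKey` would come from the click event, and a "seek" result would be applied to the media element's `currentTime`.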

I have not.

^THIS^, however, is #EPIC.